By now you’ve probably heard of the deepfake phenomenon where a person in an existing image or video is replaced with someone else’s likeness. While you might think this is just a digital version of the old dye-your-hair and wear-a-fake-mustache ploy, there are important nuances here. Most importantly, deepfakes use machine learning and AI to manipulate audio and video content in real time, and the technology scales easily, potentially saving crooks thousands of dollars on hair dye and fake mustaches 😉.

Seriously though, this has all led to a recent FBI warning about how deepfakes are now being used to interview for and get remote jobs. The scam works like this: A US citizen has important personal info stolen as a result of a phishing attack. Photos of this person are then easily found online (Facebook, LinkedIn, etc.), and that’s all that’s really needed to perpetrate the job interview deepfake. The job application is filled out remotely with the stolen info and the actual video interview is conducted via deepfake.

What’s the motive here? On the more innocent side of the spectrum, the perpetrator may want to be paid in US dollars. On the more nefarious side, the new fake employee could infiltrate various systems via employee-only access and do near limitless harm.

So, you may be thinking now, ‘I would never fall for some fake video interviewee. I know what real people look like vs Max Headroom.’ Yes, this is true. Most deepfakes still look very fake, and the AI can’t handle most basic interview questions, but a combination of improving technology and lazy interviewers could make deepfakes a much greater threat in the future. Plus, because these deepfakes can be run at scale, with hundreds of fake interviews conducted a day, this becomes a mere numbers game for most cyber crooks. Just like any phishing/whaling scheme, it’s only a matter of time before someone gets hooked and reeled in.

Another point the FBI makes is that smaller businesses are unlikely to be targeted because their data stores are fairly limited. Interestingly, larger enterprises are also not prime targets because of their multi-step hiring processes and background checking. The most at-risk for these deepfake attacks are tech startups and SaaS companies that have access to lots of data but comparatively little security infrastructure.

Seeing as phishing/whaling attacks are such a key component of these deepfake scams, I thought I’d reiterate that back in February, RPost launched the RSecurity Human Error Protection Suite to tackle the growing threat of business email compromise and wire fraud.

According to a recent report by Osterman Research, business email compromise (BEC) attacks, which are sophisticated email phishing schemes, are among the fastest growing and most concerning cybercrimes against organizations. These schemes pry loose the personal info needed to build a deepfake profile for a bogus job interview, which, if the interview results in a job, could lead to further security compromises.

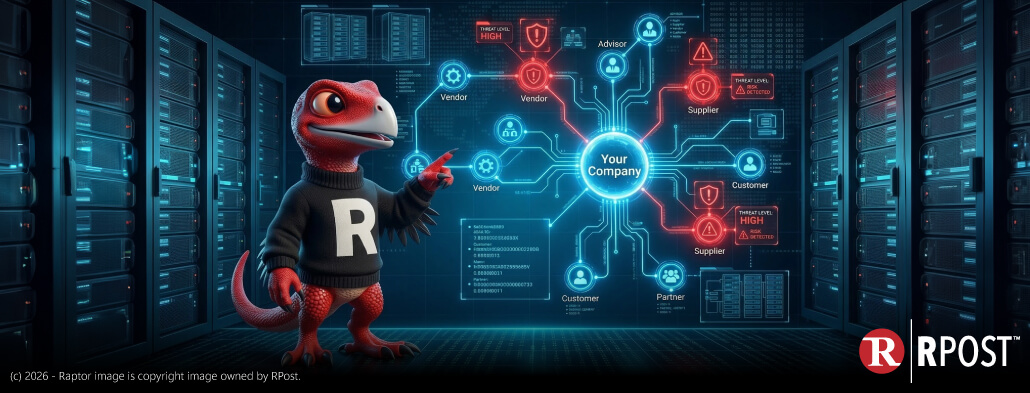

The RMail RSecurity human error prevention suite is like kryptonite to deepfake scams with its AI-triggered encryption and wire fraud protection, in-the-flow email security user training, and suite of anti-whaling BEC protections (deep-learning-enabled recipient verification, domain age check, impostor alerts, Double Blind CC™, and Disappearing Ink™). This suite adds critical layers to not only protect externally-facing business executives and their organizations but also those newfound human targets in HR and finance teams, especially those in smaller, leaner, newer organizations.

Contact us to discuss how you can get started with RMail and the Human Error Prevention Suite today.
